Instructions for using IPADS-SAI/MobiMind-Mixed-7B with libraries, notebooks, and local apps.

- Libraries

- Transformers

How to use IPADS-SAI/MobiMind-Mixed-7B with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("image-text-to-text", model="IPADS-SAI/MobiMind-Mixed-7B")
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "url": "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/p-blog/candy.JPG"},
            {"type": "text", "text": "What animal is on the candy?"}
        ]
    },
]
pipe(text=messages)
```

```python
# Load model directly
from transformers import AutoProcessor, AutoModelForImageTextToText

processor = AutoProcessor.from_pretrained("IPADS-SAI/MobiMind-Mixed-7B")
model = AutoModelForImageTextToText.from_pretrained("IPADS-SAI/MobiMind-Mixed-7B")
messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "url": "https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/p-blog/candy.JPG"},
            {"type": "text", "text": "What animal is on the candy?"}
        ]
    },
]
inputs = processor.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
print(processor.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- vLLM

How to use IPADS-SAI/MobiMind-Mixed-7B with vLLM:

Install from pip and serve model

```bash
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "IPADS-SAI/MobiMind-Mixed-7B"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "IPADS-SAI/MobiMind-Mixed-7B",
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "text",
                        "text": "Describe this image in one sentence."
                    },
                    {
                        "type": "image_url",
                        "image_url": {
                            "url": "https://cdn.britannica.com/61/93061-050-99147DCE/Statue-of-Liberty-Island-New-York-Bay.jpg"
                        }
                    }
                ]
            }
        ]
    }'
```

Use Docker

docker model run hf.co/IPADS-SAI/MobiMind-Mixed-7B

- SGLang

How to use IPADS-SAI/MobiMind-Mixed-7B with SGLang:

Install from pip and serve model

```bash
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
    --model-path "IPADS-SAI/MobiMind-Mixed-7B" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "IPADS-SAI/MobiMind-Mixed-7B",
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "text",
                        "text": "Describe this image in one sentence."
                    },
                    {
                        "type": "image_url",
                        "image_url": {
                            "url": "https://cdn.britannica.com/61/93061-050-99147DCE/Statue-of-Liberty-Island-New-York-Bay.jpg"
                        }
                    }
                ]
            }
        ]
    }'
```

Use Docker images

```bash
docker run --gpus all \
    --shm-size 32g \
    -p 30000:30000 \
    -v ~/.cache/huggingface:/root/.cache/huggingface \
    --env "HF_TOKEN=<secret>" \
    --ipc=host \
    lmsysorg/sglang:latest \
    python3 -m sglang.launch_server \
        --model-path "IPADS-SAI/MobiMind-Mixed-7B" \
        --host 0.0.0.0 \
        --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
    -H "Content-Type: application/json" \
    --data '{
        "model": "IPADS-SAI/MobiMind-Mixed-7B",
        "messages": [
            {
                "role": "user",
                "content": [
                    {
                        "type": "text",
                        "text": "Describe this image in one sentence."
                    },
                    {
                        "type": "image_url",
                        "image_url": {
                            "url": "https://cdn.britannica.com/61/93061-050-99147DCE/Statue-of-Liberty-Island-New-York-Bay.jpg"
                        }
                    }
                ]
            }
        ]
    }'
```

- Docker Model Runner

How to use IPADS-SAI/MobiMind-Mixed-7B with Docker Model Runner:

docker model run hf.co/IPADS-SAI/MobiMind-Mixed-7B
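
The curl calls in the vLLM and SGLang sections can also be issued from Python. The following is a minimal sketch using only the standard library. It assumes a server is already listening locally (port 8000 in the vLLM example, port 30000 in the SGLang examples) and that the response follows the usual OpenAI chat-completions shape. The helper names `build_chat_request` and `send` are illustrative and not part of any library.

```python
import json
import urllib.request

MODEL_ID = "IPADS-SAI/MobiMind-Mixed-7B"


def build_chat_request(base_url: str, prompt: str, image_url: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible /v1/chat/completions endpoint."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the assistant's reply text.

    Assumes the server answers with the standard `choices[0].message.content` field.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, `send(build_chat_request("http://localhost:8000", "Describe this image in one sentence.", image_url))` should return the reply text. Swap the base URL for `http://localhost:30000` when targeting the SGLang server.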

MobiMind-Mixed-7B Model

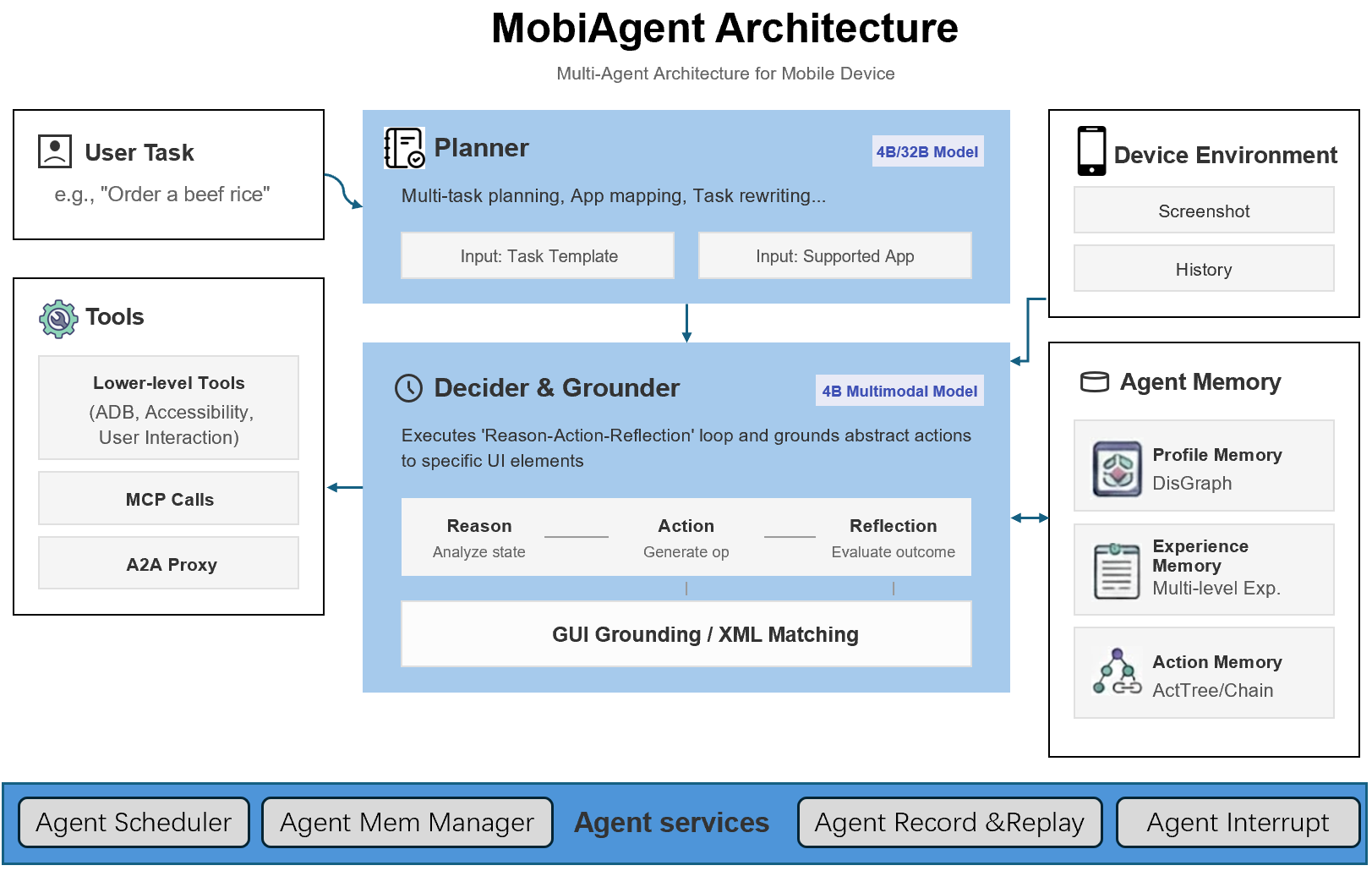

This is the 7B-parameter Mixed model of MobiAgent. It combines the abilities of the MobiMind-Decider and the MobiMind-Grounder, both presented in the paper MobiAgent: A Systematic Framework for Customizable Mobile Agents.

Paper Abstract

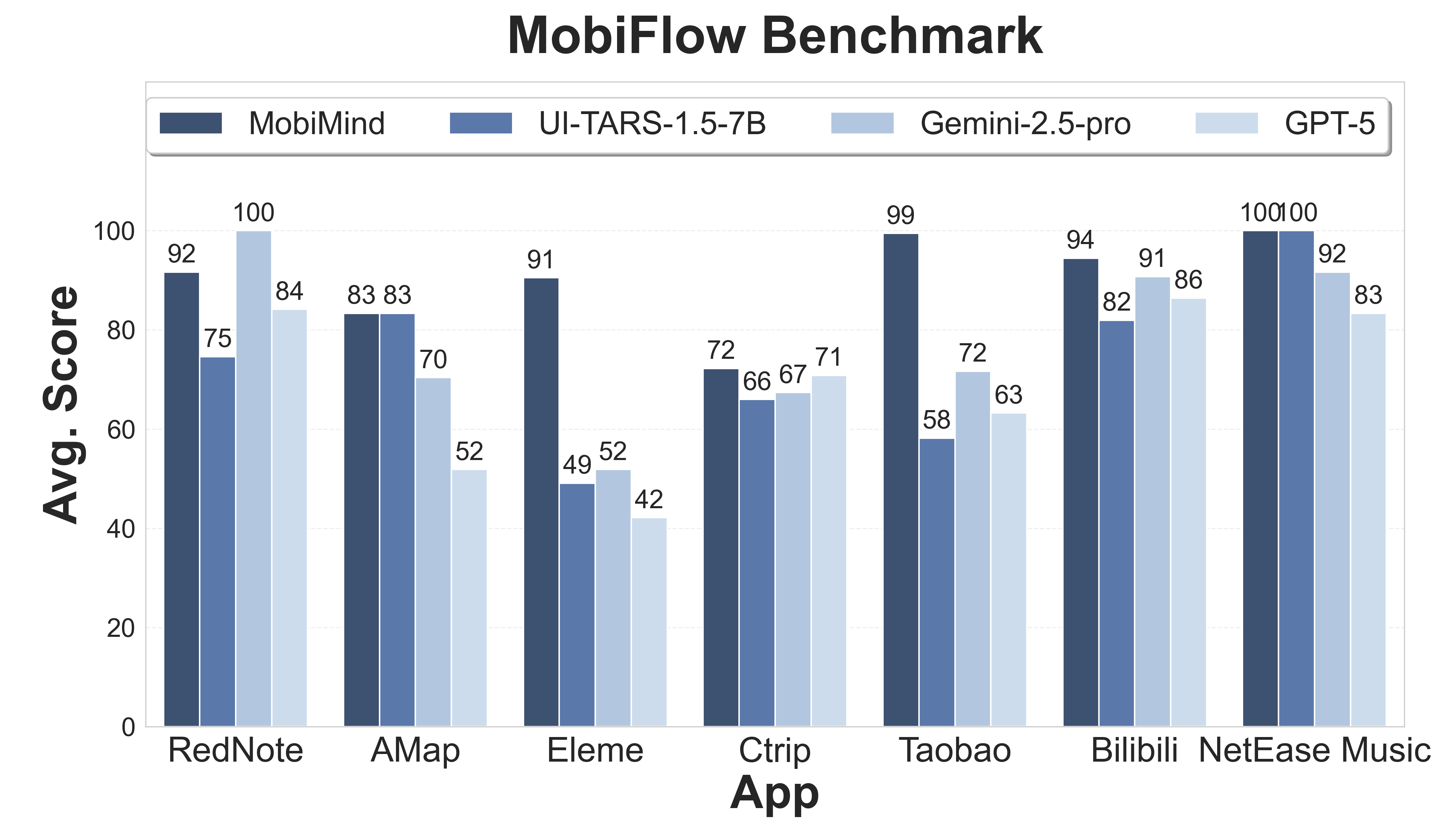

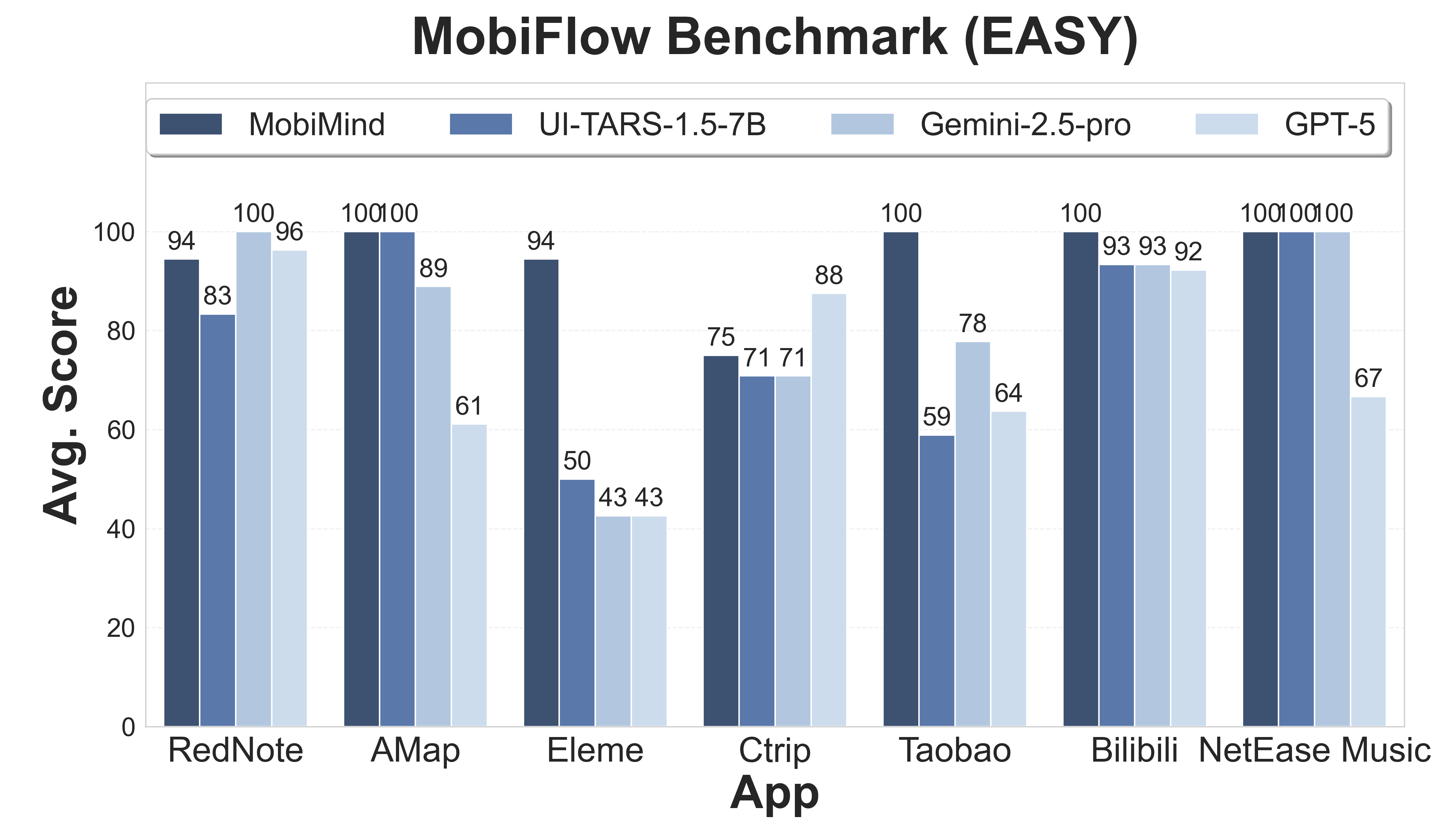

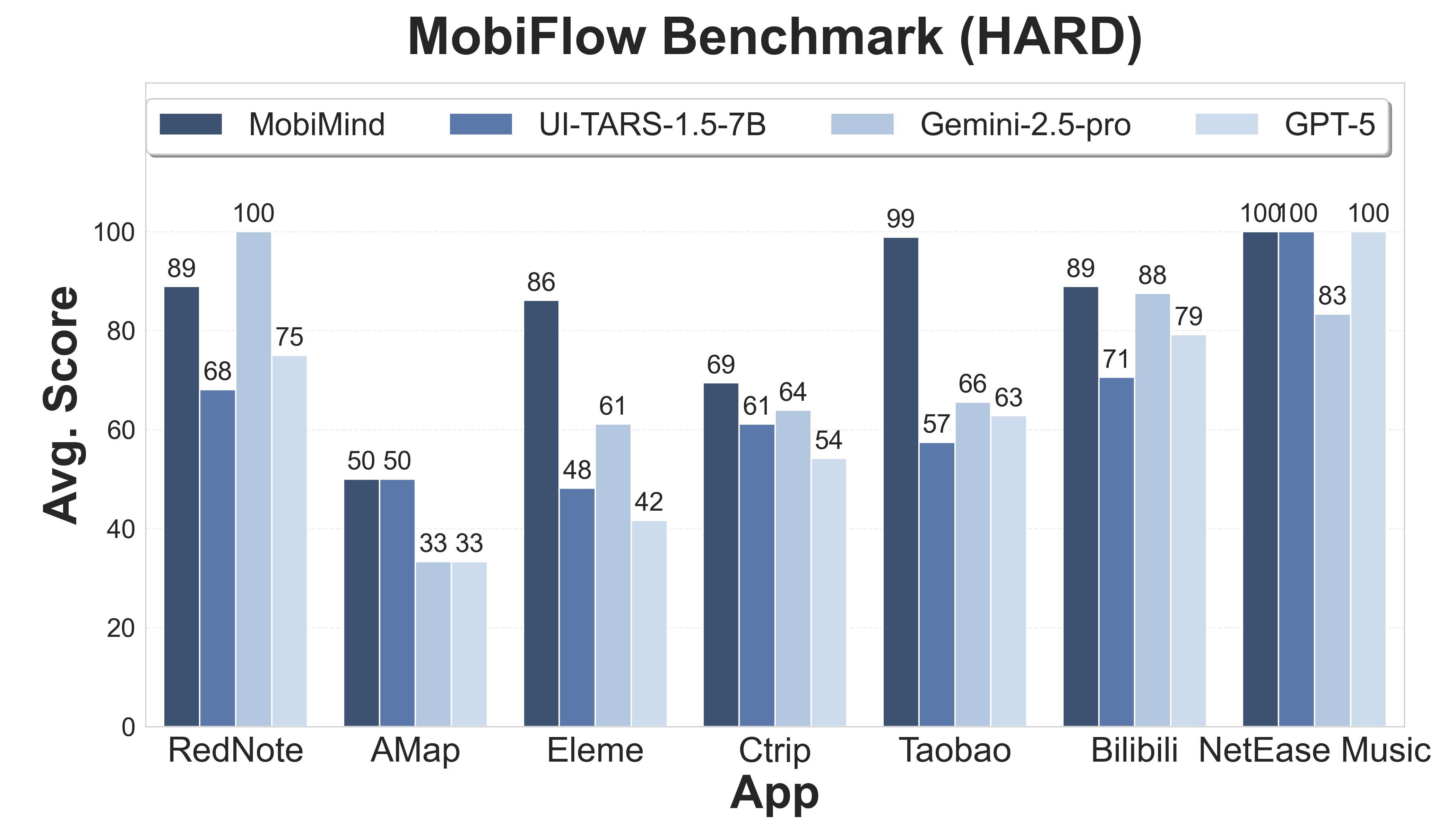

With the rapid advancement of Vision-Language Models (VLMs), GUI-based mobile agents have emerged as a key development direction for intelligent mobile systems. However, existing agent models continue to face significant challenges in real-world task execution, particularly in terms of accuracy and efficiency. To address these limitations, we propose MobiAgent, a comprehensive mobile agent system comprising three core components: the MobiMind-series agent models, the AgentRR acceleration framework, and the MobiFlow benchmarking suite. Furthermore, recognizing that the capabilities of current mobile agents are still limited by the availability of high-quality data, we have developed an AI-assisted agile data collection pipeline that significantly reduces the cost of manual annotation. Compared to both general-purpose LLMs and specialized GUI agent models, MobiAgent achieves state-of-the-art performance in real-world mobile scenarios.

About MobiAgent

MobiAgent is a mobile agent system comprising:

- An agent model family: MobiMind

- An agent acceleration framework: AgentRR

- An agent benchmark: MobiFlow

System Architecture:

Evaluation Results


Usage

Deploy model inference service with vLLM:

vllm serve IPADS-SAI/MobiMind-Mixed-7B

The model serves as both the Decider and the Grounder, so requests for either task can be routed to the same endpoint.
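
As a rough illustration of routing both tasks to one model, the sketch below builds Decider-style and Grounder-style request bodies that target the same model name. The prompt strings are hypothetical placeholders: the actual Decider and Grounder prompt formats are defined in the MobiAgent repository and are not reproduced here. The sketch also assumes the server accepts base64 `data:` URLs in `image_url`, and all helper names are illustrative.

```python
import base64

MODEL_ID = "IPADS-SAI/MobiMind-Mixed-7B"

# Hypothetical placeholder prompts (assumption); the real Decider and Grounder
# prompt formats live in the MobiAgent repository.
DECIDER_PROMPT = "Task: {task}\nDecide the next action on this screen."
GROUNDER_PROMPT = "Locate this UI element on the screen: {target}"


def screenshot_to_data_url(png_bytes: bytes) -> str:
    """Encode raw PNG bytes as a base64 data URL."""
    return "data:image/png;base64," + base64.b64encode(png_bytes).decode("ascii")


def build_payload(prompt: str, png_bytes: bytes) -> dict:
    """Build an OpenAI-style chat payload with one text part and one screenshot."""
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": screenshot_to_data_url(png_bytes)},
                    },
                ],
            }
        ],
    }


def decider_payload(task: str, png_bytes: bytes) -> dict:
    """Payload for a Decider-style request (placeholder prompt)."""
    return build_payload(DECIDER_PROMPT.format(task=task), png_bytes)


def grounder_payload(target: str, png_bytes: bytes) -> dict:
    """Payload for a Grounder-style request (placeholder prompt)."""
    return build_payload(GROUNDER_PROMPT.format(target=target), png_bytes)
```

Both payloads carry the same `model` value, so a single `vllm serve` instance can answer either kind of request. Only the prompt differs between the two tasks.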

For more usage details, such as executing GUI tasks with ADB or our Android app, please refer to our repo.
